Task intelligence is not enough

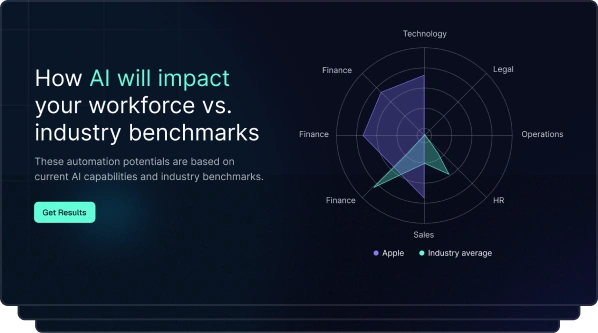

40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025. That's Gartner's projection. The message is clear: AI is reshaping work at the task level, not the job level.

The workforce technology market has responded. Vendors across the board now claim task intelligence as a core capability, promising to decompose roles into tasks, score each task for AI potential, and map the path to workforce transformation. It sounds compelling. It's also incomplete.

Task intelligence tells you what work people do, but not what skills that work requires, what it costs, or what happens when AI absorbs 30% of those tasks. For that, you need something bigger: work intelligence.

This piece explains why task intelligence matters, where it falls short, and why TechWolf deliberately chose to build work intelligence as a unified data layer connecting tasks, skills, and roles into a single source of truth.

The rise of task intelligence

Task intelligence is having its moment. The World Economic Forum's Future of Jobs Report 2025 projects 170 million new jobs created by 2030, with 92 million displaced. That's a net gain of 78 million roles, but the composition of those roles will look nothing like today.

AI changes tasks, not jobs. McKinsey research estimates that 57% of US work hours are technically automatable. Not 57% of jobs. 57% of the hours spent on the discrete tasks that make up those jobs.

That distinction matters. A software engineer's role contains tasks ranging from code review (highly automatable) to stakeholder alignment (entirely human). Treating that role as a single unit misses the point.

Major vendors now offer task-level analysis as a headline feature, decomposing roles into discrete tasks, assessing each for AI automation or augmentation potential, and producing heat maps of workforce transformation readiness. It's a meaningful step forward, but it raises a question the market isn't asking loudly enough: is mapping tasks sufficient on its own?

What task intelligence actually does

Task intelligence decomposes roles into their component tasks, then analyses each task for its relationship with AI. Can this task be automated? Augmented? Does it require human judgment that no model can replicate?

This is a meaningful evolution from job-level workforce planning. Traditional approaches treat roles as monolithic units: a "financial analyst" is a financial analyst. Task intelligence reveals that the same role might contain 25 discrete tasks, each with a different automation profile.

Some of those tasks, such as data consolidation and report formatting, are prime candidates for AI automation. Others, like regulatory judgment calls and client relationship management, remain deeply human. McKinsey's analysis found that over 70% of skills used in the workforce are relevant across both automatable and non-automatable tasks. The boundaries are blurred, not clean.

This is where task intelligence earns its value. It replaces gut-feel automation assessments with structured, data-driven analysis, allowing enterprise leaders to answer a specific question: which tasks in which roles are most affected by AI?

But answering that question is the starting point, not the finish line. Knowing that 40% of a financial analyst's tasks have automation potential is useful, but what skills do the remaining 60% require? How do you redeploy people whose tasks shift? What's the financial impact? That's where real decisions get made.

Three blind spots of standalone task intelligence

Task intelligence, on its own, has three critical gaps. These aren't flaws in the technology; they're inherent limitations of treating task data as a standalone category.

1. No skills context. Knowing what tasks people perform without knowing what skills those tasks require leaves you with half the picture. A task map might tell you that an operations manager spends 15% of their time on supply chain optimisation, but it won't tell you whether that person can take on the forecasting tasks AI is about to open up. Without a skills layer, task data remains descriptive when it needs to be prescriptive.

2. No financial translation. Most task intelligence solutions produce heat maps and potential scores showing which tasks could be automated, yet rarely translate that potential into dollars: cost per task-hour, redeployment value, and ROI of augmentation versus full automation. Without financial translation, task intelligence remains an academic exercise that informs strategy conversations but doesn't fund them.

3. No integration. Task data that lives in a standalone tool is another data island. People analytics leaders already manage 10 or more HR systems and need task-level insights embedded in their existing analytics stack, connected to their HRIS, ATS, and workforce planning tools. Siloed task data creates more work, not less.

These three gaps share a common root: task intelligence treats tasks as the end point, when the real value starts where task data connects to skills, financial outcomes, and the enterprise systems where workforce decisions actually happen.

From task intelligence to work intelligence: why we built a bigger frame

When TechWolf set out to build the intelligence layer for workforce decisions, we faced a choice. We could have built a task intelligence product. The market was heading there, and the demand was clear.

We chose differently. We built work intelligence.

The reasoning was straightforward. Task data is essential, but it's one dimension of a three-dimensional problem. Understanding what work people do (tasks) matters. Understanding what capabilities that work requires (skills) matters. Understanding how those capabilities map to roles, costs, and business outcomes matters. All three, connected.

Work intelligence is that connection. It maps tasks, skills, and roles in a single data layer, inferring everything from the enterprise data organisations already have: HRIS, ATS, LMS, and collaboration tools. No manual cataloguing. No 18-month taxonomy projects.

The inference model analyses existing HR data to produce task-level and skill-level intelligence simultaneously, mapping an average of 25 tasks per role with 82% baseline inference accuracy, connected to an ontology of over 35,000 skills. Each task is scored for AI potential using the Stanford Human Agency Scale: a science-backed framework that distinguishes between automation, augmentation, and human-only tasks.
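To make the idea of a single data layer concrete, here is a minimal sketch of how tasks, skills, and roles could be linked in one model. It is an illustration only, not TechWolf's schema: the class names, the three-way `AIPotential` label (a simplification of the automation, augmentation, and human-only distinction described above), and the example skills are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class AIPotential(Enum):
    # Simplified three-way label; a real scoring framework may be more granular.
    AUTOMATION = "automation"
    AUGMENTATION = "augmentation"
    HUMAN_ONLY = "human-only"


@dataclass(frozen=True)
class Task:
    name: str
    potential: AIPotential
    skills: frozenset[str]  # skills the task requires (hypothetical labels)


@dataclass(frozen=True)
class Role:
    title: str
    tasks: tuple[Task, ...]


def skills_after_automation(role: Role) -> set[str]:
    """Return the skills still needed once automation-candidate tasks are removed."""
    remaining: set[str] = set()
    for task in role.tasks:
        if task.potential is not AIPotential.AUTOMATION:
            remaining |= task.skills
    return remaining


# Hypothetical financial analyst role with four of its tasks.
analyst = Role(
    title="Financial analyst",
    tasks=(
        Task("data consolidation", AIPotential.AUTOMATION,
             frozenset({"data cleaning", "spreadsheet modelling"})),
        Task("report formatting", AIPotential.AUTOMATION,
             frozenset({"data visualisation"})),
        Task("regulatory judgment calls", AIPotential.HUMAN_ONLY,
             frozenset({"regulatory knowledge", "risk assessment"})),
        Task("client relationship management", AIPotential.HUMAN_ONLY,
             frozenset({"communication", "risk assessment"})),
    ),
)

remaining_skills = skills_after_automation(analyst)
```

Because tasks and skills sit in the same structure, the question "what skills does the remaining work require?" becomes a simple lookup rather than a separate mapping project.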

This isn't a semantic distinction but an architectural one. Solutions that map tasks in isolation force enterprises to build the skills connection themselves. Work intelligence delivers that connection out of the box, integrated with Workday, SAP SuccessFactors, ServiceNow and, since March 2026, Visier, where task-level and skill-level insights are embedded directly into workforce analytics dashboards.

What work intelligence looks like in practice

The difference between task intelligence and work intelligence becomes concrete when you see how enterprises use the connected data.

Translating tasks to dollar value. A global insurance company used work intelligence to build a task cost estimation model, mapping tasks to time allocation and total employment cost to translate abstract task data into a dollar value per task. The result was a clear financial case for which tasks to automate, which to augment, and which to leave untouched: concrete numbers the CFO could act on.
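The arithmetic behind a model like this can be sketched in a few lines. The example below assumes the simplest possible approach, splitting a role's total annual employment cost across tasks in proportion to time allocation. The function name, task names, time shares, and cost figure are hypothetical and are not taken from the insurer's actual model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskAllocation:
    name: str
    time_share: float  # fraction of working time spent on the task (0 to 1)


def cost_per_task(total_employment_cost: float,
                  allocations: list[TaskAllocation]) -> dict[str, float]:
    """Split total employment cost across tasks in proportion to time spent."""
    total_share = sum(a.time_share for a in allocations)
    if abs(total_share - 1.0) > 1e-6:
        raise ValueError(f"time shares must sum to 1.0, got {total_share:.4f}")
    return {
        a.name: round(total_employment_cost * a.time_share, 2)
        for a in allocations
    }


# Hypothetical role: 120,000 in total annual employment cost.
analyst_tasks = [
    TaskAllocation("data consolidation", 0.25),
    TaskAllocation("report formatting", 0.15),
    TaskAllocation("regulatory judgment calls", 0.35),
    TaskAllocation("client relationship management", 0.25),
]

costs = cost_per_task(120_000, analyst_tasks)
for name, cost in costs.items():
    print(f"{name}: ${cost:,.2f}")
```

A production model would likely account for more than time share, such as headcount per role and regional cost differences, but the proportional split shows how task data turns into a figure a finance team can work with.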

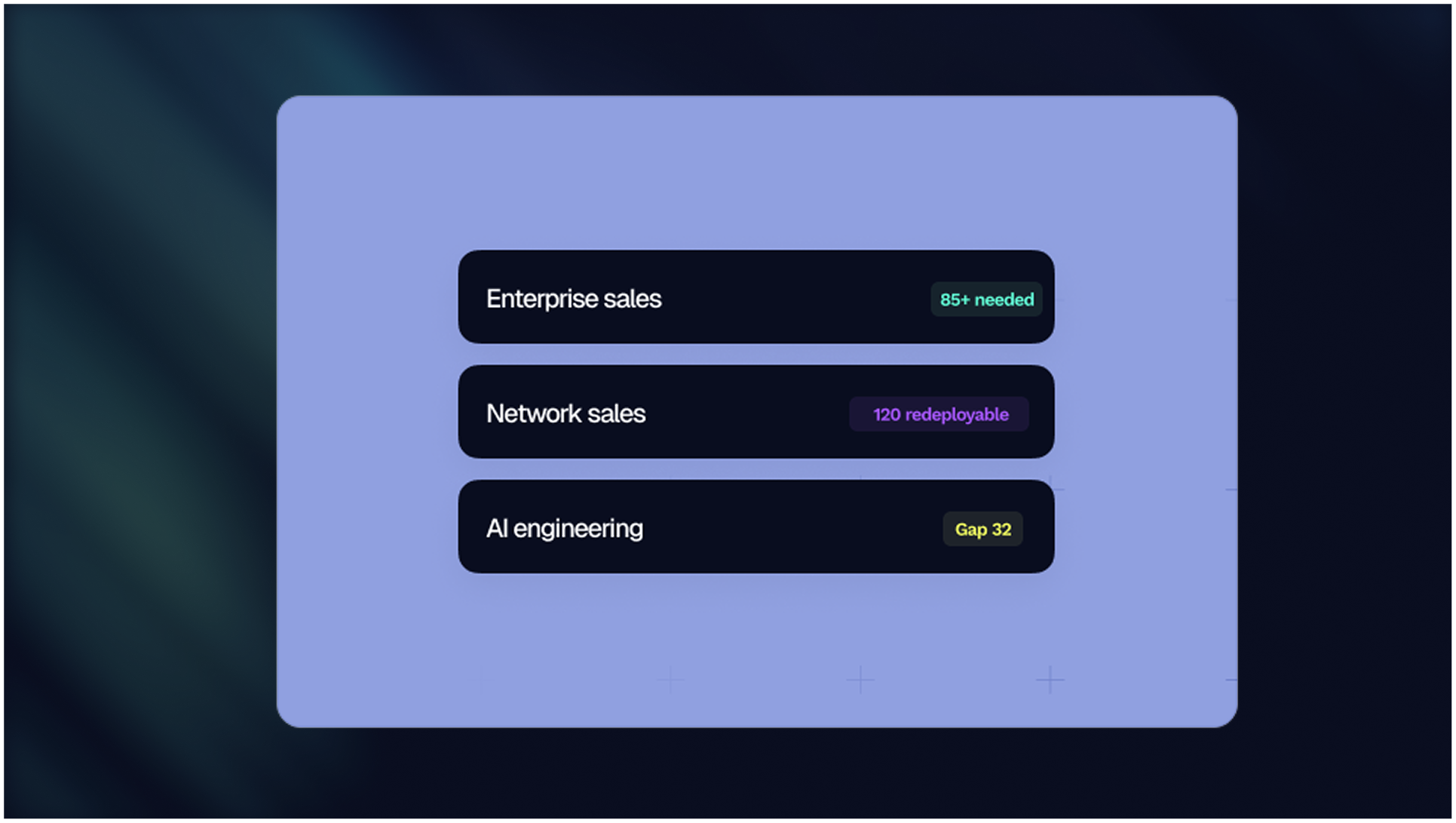

Unlocking revenue through workforce redeployment. A Fortune 500 life sciences company mapped 25 tasks per role across its organisation, with 82% inference accuracy. Connecting task data to skills intelligence revealed redeployment opportunities invisible at the job-title level. The impact: over $10 million in previously blocked backlog revenue, unlocked by moving the right people to the right work based on task-level and skill-level data.

Shifting hiring strategy through task analysis. TechWolf's own professional services team ran an internal pilot, connecting project management tools to work intelligence to map daily tasks. The analysis revealed that deeply technical skills were becoming less critical, leading to one senior role being redesigned into two junior roles with different task profiles. The saving: $76,000 in a single team, with better alignment between tasks and capabilities.

In each case, the value came not from task mapping alone but from connecting task data to skills, costs, and the enterprise systems where decisions happen.

What to look for in a work intelligence solution

If your organisation is evaluating task intelligence or work intelligence capabilities, four criteria separate the solutions that deliver lasting value from those that create another data project.

1. Inference capability. Does the solution require manual task cataloguing, or does it infer task and skill data from your existing enterprise systems? Manual approaches are slow, expensive, and outdated before they ship. Inference at scale delivers data in weeks, not months.

2. Skills-to-tasks mapping. Does it connect task data to a comprehensive skills ontology? Task mapping without skills context is a map without a legend. Look for a unified data layer that links tasks, skills, and roles in a single model.

3. Enterprise integration. Does it embed into your existing analytics stack? Task data in a standalone dashboard is another silo. The value multiplies when work intelligence feeds directly into your HRIS, ATS, and workforce planning tools.

4. Speed to value. How long from contract to first insights? The best solutions deliver actionable work intelligence within weeks. If the timeline stretches to quarters, the data will be stale before you use it.

Task intelligence was the right starting point for the market, forcing the conversation from jobs to tasks. But the next step is clear: connecting tasks to skills, costs, and the enterprise systems that drive workforce decisions. That's work intelligence, and it's where the category is heading.

Explore TechWolf's work intelligence to see how leading enterprises are connecting task data to skills, costs, and business outcomes.
