Why most skills assessments produce data nobody trusts

Self-assessments are the most common method organisations use to map workforce skills. They also correlate at only about 0.29 with actual job performance, according to Dunning-Kruger research on self-assessment accuracy. That is a weak relationship: a self-rating tells you little about how well someone actually performs.

Workera research confirms the pattern: seven out of ten employees inaccurately assess their own skills. Some overestimate and some underestimate, but the net result is the same: the skills data sitting in your HR system right now is probably wrong.

This matters because every downstream decision depends on it. Internal mobility, workforce planning, L&D investment, succession planning: all of it runs on skills data. When that data is unreliable, you're not making informed decisions. You are making expensive guesses.

The problem is not execution. HR teams are not doing skills assessment badly. The problem is structural: the most common assessment methods cannot produce reliable data at enterprise scale. Here's why, and what the alternative looks like.

The four assessment methods every organisation defaults to

Most organisations use some combination of four methods to assess workforce skills. Each has a long history. Each feels intuitive. And each breaks down the moment you try to apply it across thousands of employees.

1. Self-assessment

Employees rate their own proficiency, typically on a 1-5 scale. It's the most widely used approach because it's the easiest to deploy. You send a survey, collect responses, and compile the data. The problem is that what you collect is perception, not reality.

2. Manager evaluation

Managers assess their direct reports based on observed performance. This works in small teams where the manager sees the work daily. At enterprise scale, a manager with 15 direct reports cannot reliably evaluate skills they don't use themselves. Recency bias dominates: the last project colours everything.

3. Competency frameworks

HR teams build structured models defining what skills each role requires. These frameworks take months to create, and a comprehensive skills library can take two years. By the time you finish, the skills landscape has moved.

4. Skills surveys and periodic audits

Annual or biannual campaigns ask the entire workforce to report their capabilities. Response rates typically range between 30% and 60%, so a large share of the workforce is missing from the data. What is collected is a snapshot in time, and that snapshot starts ageing the moment it is taken.

According to Mercer's 2025 Global Talent Trends report, only 8% of organisations use AI-driven skills assessment methods. The remaining 92% rely on some combination of the four approaches above. The question is not whether these methods are popular. The question is whether they work.

Why these methods fail at enterprise scale

Each of these methods works well enough in a team of 20. None of them works in a workforce of 20,000. The failure is not gradual. It is structural.

The reliability problem

Dunning-Kruger research established that self-assessment correlates at about 0.29 with actual demonstrated competence. People who are weakest at a skill overestimate the most. People who are strongest underestimate. At enterprise scale, these errors do not cancel out. They compound.
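
To put the 0.29 figure in perspective, squaring a correlation coefficient gives the share of variance that one measure explains in the other. The sketch below shows that arithmetic; the function name is illustrative, and the calculation assumes a simple linear relationship between self-ratings and demonstrated competence.

```python
def variance_explained(r: float) -> float:
    """Share of variance explained by a linear relationship with correlation r."""
    return r ** 2


self_assessment_r = 0.29  # correlation quoted in the text above
share = variance_explained(self_assessment_r)
print(f"Explained: {share:.1%}, unexplained: {1 - share:.1%}")
```

On that reading, a self-rating accounts for less than a tenth of the variation in demonstrated competence. The rest is noise as far as the skills record is concerned.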

Workera's analysis of skills assessment accuracy found that seven out of ten employees rate themselves inaccurately. When your workforce planning, internal mobility, and L&D decisions depend on this data, you are building on a foundation that is wrong for roughly seven in ten of your people.

The bottleneck problem

Manager evaluations depend on direct observation. But the average enterprise manager oversees work they may not fully understand. A VP of Engineering cannot reliably assess whether a data scientist's NLP skills are intermediate or advanced. A Head of Finance cannot evaluate cloud architecture competence. The evaluator's own expertise becomes the ceiling.

Gartner research shows that 48% of organisations say demand for new skills evolves faster than their existing structures can support. Managers are being asked to evaluate skills that did not exist when they built their own careers.

The decay problem

AI skills now have a half-life of roughly two years, meaning they lose about half their value in that time. But manual assessment projects, from taxonomy design to full workforce survey, take 18 months or more to complete.

The maths is unforgiving. By the time you finish assessing, a significant portion of the data is already outdated. You are not capturing the current state of your workforce. You are capturing a historical artefact.
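
The arithmetic can be sketched under one simplifying assumption: that skills data loses relevance at the same exponential rate as the skills it describes. That assumption and the function name are illustrative, not taken from the research cited here.

```python
def remaining_value(elapsed_years: float, half_life_years: float) -> float:
    """Fraction of original value left after exponential decay."""
    return 0.5 ** (elapsed_years / half_life_years)


# Figures from the text: a two-year half-life and an 18-month project.
remaining = remaining_value(elapsed_years=1.5, half_life_years=2.0)
print(f"{remaining:.0%} of value remains; {1 - remaining:.0%} has decayed")
```

Under these assumptions, data captured at the start of an 18-month project has lost roughly 40% of its value by the time the project ends. Data collected later in the project has aged less, so this is an upper bound rather than an average.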

The cost of making decisions on unreliable skills data

Bad skills data is not a theoretical problem. It has a measurable price tag.

$5.5T: projected cost of skills gaps to the global economy by 2026 (source: IDC Future of Work research).

That figure includes misallocated training budgets, failed internal mobility programmes, external hiring premiums, and workforce planning built on assumptions rather than evidence.

The costs show up in specific, recognisable ways. L&D teams invest millions in training programmes, but because pre- and post-assessment data is unreliable, they cannot prove they closed actual skill gaps. Talent marketplace platforms launch with ambition but stall at 15% adoption, because employees cannot accurately describe their own skills and managers do not trust what they see.

Deloitte's skills-based organisation research found that only 10% of HR executives can effectively classify skills into a taxonomy. The other 90% are working with incomplete, inconsistent, or outdated skills data. When a CHRO presents workforce capability data to the board, they know, and the board suspects, that the numbers are soft.

Fosway Group research reinforces this: only 46% of organisations have a single enterprise-wide skills framework. The rest operate with fragmented, function-specific models that do not talk to each other. Skills data becomes siloed, inconsistent, and unusable for enterprise-level decisions.

From asking to observing: a different approach to skills assessment

The methods described above share a common assumption: to know what skills someone has, you need to ask them or someone who manages them. But there is an alternative: observe what people actually do.

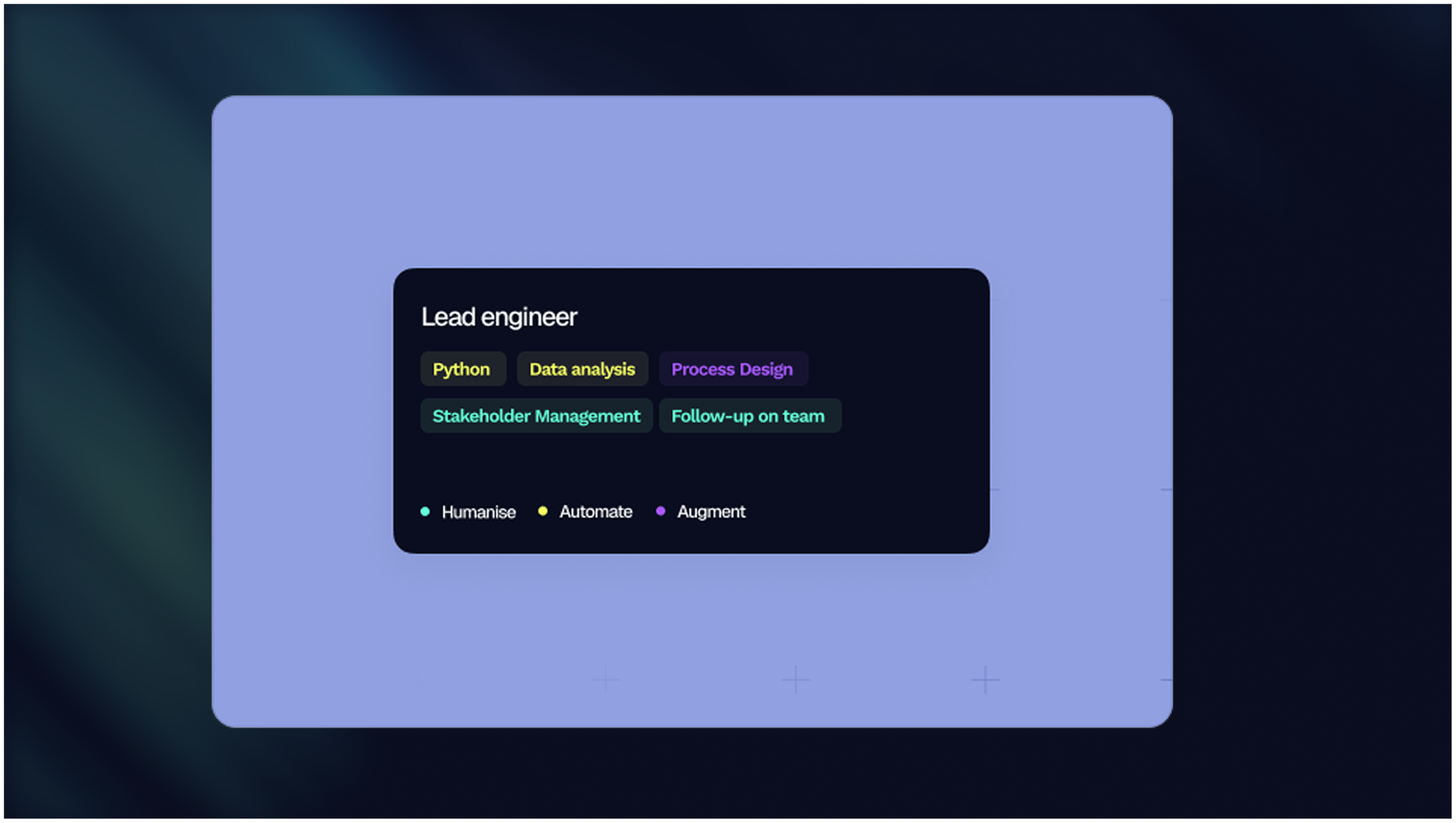

Skills inference is the process of identifying skills from existing work data rather than from self-reporting. Every organisation already generates rich signals about what their people do: job changes recorded in the HRIS, applications processed through the ATS, courses completed in the LMS, projects assigned, certifications earned. These signals reflect actual behaviour, not self-perception.

This approach addresses the structural failures of manual methods directly. It scales without surveys because the data already exists. It updates continuously, because work data changes in real time. It reflects what people actually do, not what they believe they can do. And it removes the manager bottleneck, because it does not depend on one person's observation of another.
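
As a rough illustration of the idea, the sketch below scores skills from work signals instead of survey answers. Everything in it is hypothetical: the `Signal` record, the signal kinds, the weights, and the two-year recency half-life are assumptions made for the example, not a description of any particular product. It also assumes each signal has already been mapped to a skill upstream.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

# Hypothetical weights: how strongly each kind of work signal counts as evidence.
SIGNAL_WEIGHTS = {
    "job_change": 1.0,        # recorded in the HRIS
    "project": 1.0,           # project assignments
    "certification": 0.8,     # certifications earned
    "course_completed": 0.5,  # recorded in the LMS
    "application": 0.3,       # processed through the ATS
}
HALF_LIFE_YEARS = 2.0  # assumed rate at which older evidence loses weight


@dataclass(frozen=True)
class Signal:
    employee_id: str
    kind: str
    skill: str
    observed_on: date


def infer_skills(signals, as_of):
    """Sum recency-weighted evidence per employee and skill."""
    scores = defaultdict(lambda: defaultdict(float))
    for s in signals:
        age_years = max((as_of - s.observed_on).days, 0) / 365.25
        recency = 0.5 ** (age_years / HALF_LIFE_YEARS)
        # Unknown signal kinds contribute nothing to the score.
        scores[s.employee_id][s.skill] += SIGNAL_WEIGHTS.get(s.kind, 0.0) * recency
    return {emp: dict(skills) for emp, skills in scores.items()}


signals = [
    Signal("e1", "project", "python", date(2025, 1, 1)),
    Signal("e1", "course_completed", "python", date(2025, 1, 1)),
    Signal("e1", "certification", "sql", date(2021, 1, 1)),
]
profile = infer_skills(signals, as_of=date(2025, 1, 1))
print(profile)
```

In this toy data, recent project work and a completed course both add evidence for Python, while a four-year-old SQL certification counts for much less. Re-running the function as new signals arrive is what keeps the picture current without a survey.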

The shift from asking to observing is not an incremental improvement on existing methods. It is a different model for assessing skills altogether. Instead of starting with a taxonomy and asking people to map themselves to it, you start with work data and let the skills picture emerge from what people actually do.

The market is beginning to recognise this shift. Mercer's 2025 report shows that while only 8% of organisations currently use AI-driven methods, adoption is accelerating. In February 2026, Phenom acquired Be Applied specifically to add cognitive assessment capabilities to its talent platform. The direction is clear: the future of skills assessment is observation, not self-report.

The early results from organisations that have made this shift are striking. A global financial services firm with over 50,000 employees moved from annual self-assessment surveys to inference-based skills mapping. Data accuracy exceeded 90%. The data updated continuously. And the project took weeks, not the 18 months its previous taxonomy initiative had required.

What changes when skills assessment actually works

When skills data is reliable, every decision it feeds improves. Internal mobility moves from guesswork to matching. L&D investment connects to measurable skill gaps, not assumptions. Workforce planning runs on evidence the board can trust. Succession planning identifies candidates based on demonstrated capability, not manager impressions.

The gap between where most organisations are today and where the technology allows them to be is significant. Ninety-two percent are still relying on methods that produce data they know is unreliable. The 8% who have adopted AI-driven approaches are building a compounding advantage: better data leads to better decisions, which leads to better outcomes, which generates more data.

The first step is not buying new technology. It is acknowledging that the current approach is structurally broken. Not poorly executed. Structurally broken. The instinct that something is wrong with your skills data is correct. The fix is not a better survey. It is a fundamentally different way of seeing what your people can do.

